Compute-in-Memory

Boosting memory capacity and processing speed

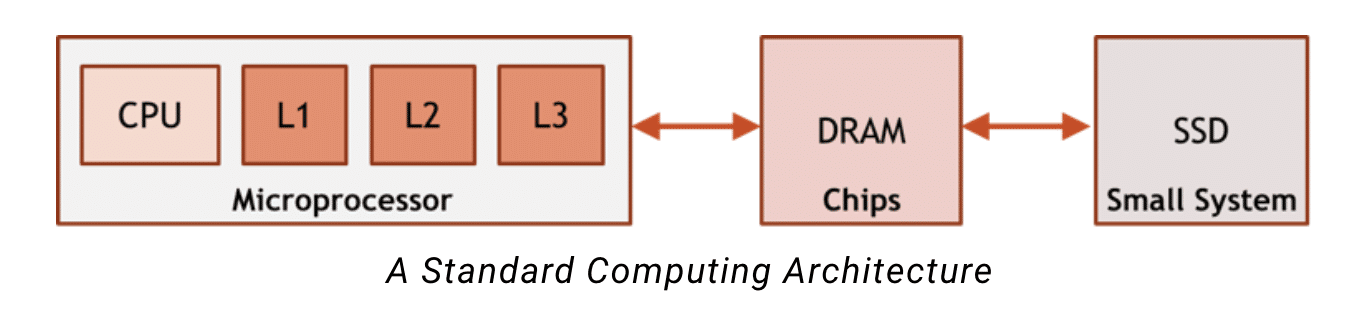

Today’s most common computing architectures assume that the full memory space is too large to fit on-chip near the processor, and that the processor cannot know in advance which data it will need or when. To cope with both the size and the uncertainty, these architectures build a memory hierarchy. The levels nearest the CPU are small and fast and can sustain frequent access, while DRAM and SSD are large enough to store the bulkier, less time-sensitive data.

Compute-in-memory makes different assumptions: there is a large amount of data to access, and we know exactly when each piece is needed. These assumptions hold for AI inference applications because the execution flow of a neural network is deterministic: unlike in many other applications, it does not depend on the input data.
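
To make the determinism concrete, here is a minimal Python sketch of an access schedule built from the network structure alone. The `Layer` type, the `build_schedule` helper, and the three-layer `NETWORK` with its sizes are hypothetical names and values chosen for illustration; they do not describe any particular chip or toolchain.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    name: str
    weight_count: int  # number of weights this layer reads


def build_schedule(layers):
    """Return (step, layer name, weight offset, weight count) per layer.

    The schedule depends only on the network structure, never on an input,
    so it can be computed once, before any inference runs.
    """
    schedule = []
    offset = 0
    for step, layer in enumerate(layers):
        schedule.append((step, layer.name, offset, layer.weight_count))
        offset += layer.weight_count
    return schedule


# Hypothetical three-layer network; the sizes are illustrative only.
NETWORK = [
    Layer("conv1", 3 * 3 * 3 * 16),
    Layer("conv2", 3 * 3 * 16 * 32),
    Layer("fc", 32 * 10),
]

SCHEDULE = build_schedule(NETWORK)

if __name__ == "__main__":
    for entry in SCHEDULE:
        print(entry)
```

Because `build_schedule` never looks at an input sample, the same schedule applies to every inference, which is the property that lets data placement be planned ahead of time rather than guessed at by a cache.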

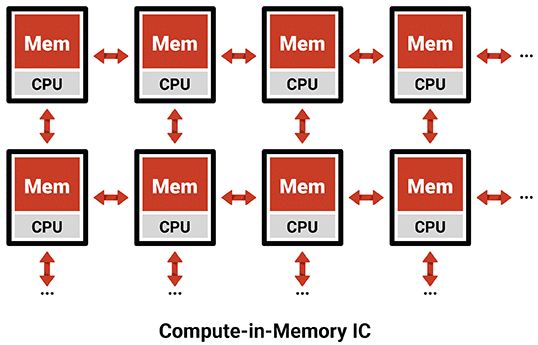

Using that knowledge, we strategically control where data sits in memory, instead of building a cache hierarchy to compensate for what we do not know. Compute-in-memory also adds local compute to each memory array, so data is processed directly beside the array that stores it. With compute next to every memory array, we get an enormous memory with the same performance and efficiency as L1 cache (or even register files).
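
As a rough software analogy for local compute beside each memory array, the sketch below splits a weight matrix across tiles and has each tile multiply its own rows by the incoming activations. It assumes the workload is a matrix-vector product, which the text above does not state. `MemoryTile`, `split_into_tiles`, and `matvec` are hypothetical names, and the code is a toy model of the data movement, not a description of the hardware.

```python
class MemoryTile:
    """One memory array with its own local compute (a toy model)."""

    def __init__(self, weight_rows):
        # Weights stay resident in this tile; they are never copied out.
        self.weight_rows = weight_rows

    def multiply(self, activations):
        # Local multiply-accumulate: weights are read where they are stored.
        return [
            sum(w * a for w, a in zip(row, activations))
            for row in self.weight_rows
        ]


def split_into_tiles(weights, rows_per_tile):
    """Assign consecutive rows of the weight matrix to tiles."""
    return [
        MemoryTile(weights[i:i + rows_per_tile])
        for i in range(0, len(weights), rows_per_tile)
    ]


def matvec(tiles, activations):
    """Send the activations to every tile and collect each output slice."""
    outputs = []
    for tile in tiles:
        outputs.extend(tile.multiply(activations))
    return outputs


# Illustrative 4x2 weight matrix split across two tiles.
WEIGHTS = [[1, 2], [3, 4], [5, 6], [7, 8]]
TILES = split_into_tiles(WEIGHTS, rows_per_tile=2)
RESULT = matvec(TILES, [1, 1])

if __name__ == "__main__":
    print(RESULT)
```

In this model only the activation vector and the per-tile results move between tiles; the weights, which are the bulk of the data, stay in place.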