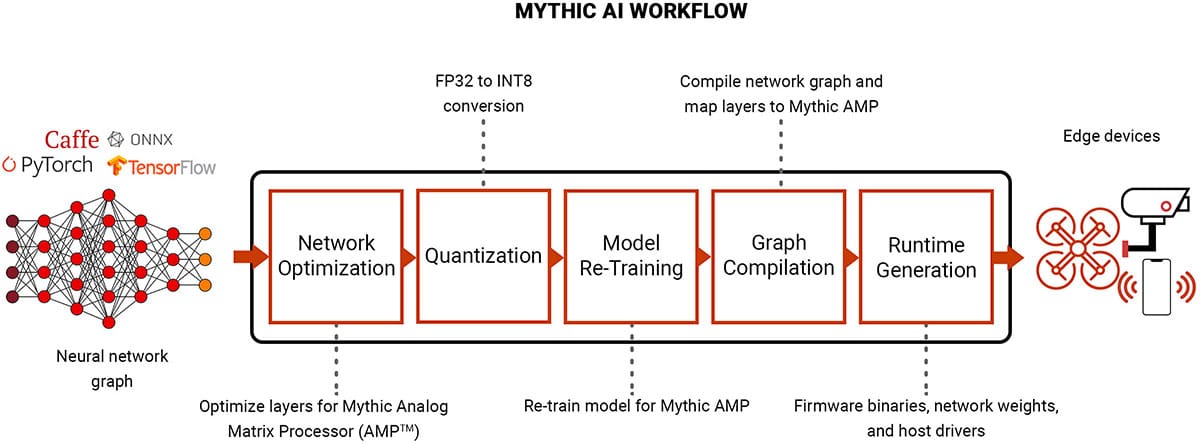

Mythic AI Workflow

Deploying DNN models to the Mythic APU™

Mythic’s platform delivers category-leading performance, power, and on-chip model capacity in a cost-effective form factor. To leverage this compute, Mythic’s software stack optimizes and compiles trained neural networks using a flow that developers will find familiar and easy to use. We build the front-end on existing ecosystems, such as PyTorch, to ensure frictionless integration with standard training flows.

The software then runs through two stages: optimization and compilation.

- The Mythic Optimization Suite transforms the neural network into a form that is compatible with analog compute-in-memory, including quantization from floating-point values to 8-bit integers.

- The Mythic Graph Compiler performs automatic mapping, packing, and code generation.

The final result is a packaged binary containing everything that the host driver needs to program the Mythic APU™ and run neural networks in a real-time environment.

MYTHIC OPTIMIZATION SUITE

The first stage of the Mythic AI workflow optimizes a trained neural network. The quantization flow converts 32-bit floating-point weights and activations (the standard numerical format in training) to 8-bit integers, which is essential for effective deployment at the edge. Quantization is a major pain point for customers with high accuracy requirements. Our simple flow runs after training and converts the network to an 8-bit analog representation (ANA8). The resulting accuracy is comparable to that of digital 8-bit quantization, which is typically deployed in power-constrained edge applications.
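To make the float-to-integer conversion concrete, here is a minimal sketch of generic symmetric post-training quantization to signed 8-bit integers. It illustrates the digital 8-bit baseline mentioned above, not the ANA8 conversion itself. The function names, the per-tensor scale, and the sample weights are assumptions chosen for illustration.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization of floats to signed 8-bit integers."""
    max_abs = max(abs(v) for v in values)
    # Guard against an all-zero tensor, which would otherwise give a zero scale.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the symmetric range [-127, 127].
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize(q, scale):
    """Map quantized integers back to approximate float values."""
    return [qi * scale for qi in q]


# Hypothetical weights, for illustration only.
weights = [0.82, -1.27, 0.004, 0.31, -0.56]
q_weights, scale = quantize_int8(weights)
restored = dequantize(q_weights, scale)

# The round-trip error per value is bounded by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

The scale is derived from the largest absolute value, so every weight lands inside the integer range and the worst-case rounding error is half of one step.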

We provide retraining flows for applications with strict accuracy requirements and/or more aggressive performance and power targets. Quantization-aware and analog-aware retraining builds resiliency into layers that are sensitive to the lower bit-depths of quantization and to analog effects. For aggressive performance and power targets, certain layers can be pushed to 4 bits and below without a significant drop in accuracy.
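Quantization-aware retraining commonly inserts a "fake quantization" step into the forward pass so that the network sees quantization error during training. The sketch below shows only that step, applied at two bit depths to compare the error each introduces in one layer. It does not model training or analog effects, and the function names and sample weights are assumptions for illustration.

```python
def fake_quantize(values, bits):
    """Snap floats to a signed `bits`-wide grid and return the float approximation."""
    levels = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / levels if max_abs > 0 else 1.0
    return [max(-levels, min(levels, round(v / scale))) * scale for v in values]


def mean_squared_error(a, b):
    """Average squared difference between two equal-length sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


# Hypothetical layer weights, for illustration only.
layer_weights = [0.82, -1.27, 0.004, 0.31, -0.56, 0.09, -0.44, 1.01]

# Quantization error for the same layer at 8 bits and at 4 bits.
error_by_bits = {
    bits: mean_squared_error(layer_weights, fake_quantize(layer_weights, bits))
    for bits in (8, 4)
}
```

Running this comparison per layer is one simple way to see which layers tolerate a lower bit depth and which are sensitive enough to need retraining.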

MYTHIC GRAPH COMPILER

The second stage of the Mythic AI workflow generates the binary image that runs on the Mythic APU™. We handle the conversion from a neural network compute graph to machine code through an automated series of steps including mapping, optimization, and code generation. The graph compiler automatically leverages powerful hardware architecture elements, including many-core processing, SIMD vector engines, and dataflow schedulers. Even the host driver is simple and pain-free, with input/output and memory transfers handled behind the scenes. We also provide compiler support for compute-intensive operations before, after, and between neural network layers, using our array of processors and SIMD vector engines.
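As a rough intuition for what a packing step does, the toy sketch below places layers into fixed-capacity units using the classic first-fit decreasing heuristic. This is not the Mythic Graph Compiler's algorithm. The term "tile", the capacity of 100, the layer names, and the sizes are all hypothetical.

```python
def pack_layers(layer_sizes, tile_capacity):
    """First-fit decreasing: put each layer in the first tile with room for it."""
    tiles = []  # each tile tracks its remaining "free" space and its "layers"
    ordered = sorted(layer_sizes.items(), key=lambda kv: kv[1], reverse=True)
    for name, size in ordered:
        if size > tile_capacity:
            raise ValueError(f"{name} does not fit in a single tile")
        for tile in tiles:
            if tile["free"] >= size:
                tile["layers"].append(name)
                tile["free"] -= size
                break
        else:
            # No existing tile has room, so open a new one.
            tiles.append({"free": tile_capacity - size, "layers": [name]})
    return tiles


# Hypothetical layer sizes in arbitrary units, for illustration only.
layers = {"conv1": 40, "conv2": 70, "conv3": 30, "fc1": 60, "fc2": 20}
placement = pack_layers(layers, tile_capacity=100)
```

Sorting the largest layers first tends to leave fewer awkward gaps, which is why this heuristic is a common baseline for packing problems.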