M1076 Analog Matrix Processor

The M1076 Mythic AMP™ delivers up to 25 TOPS in a single chip for high-end edge AI applications.

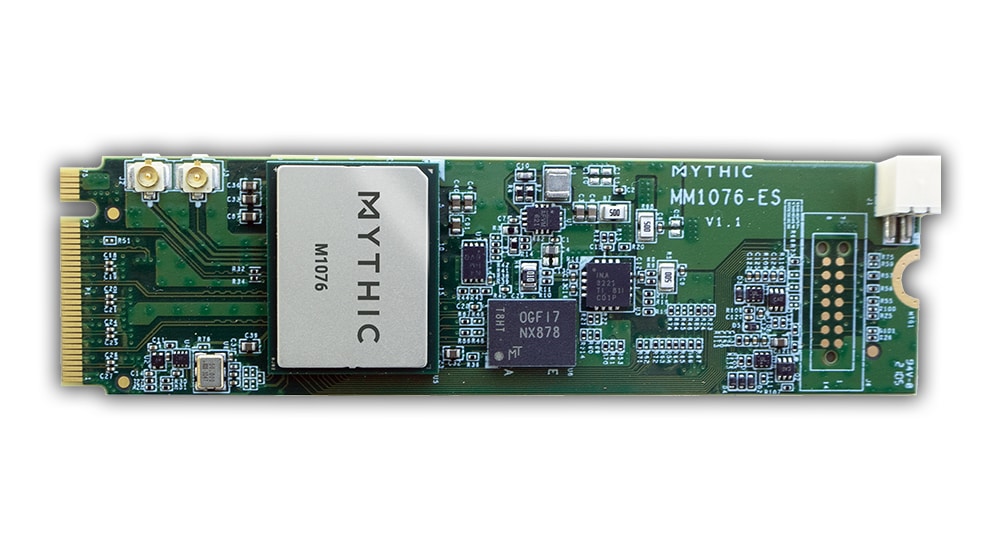

MM1076 M.2 M Key Card

The MM1076 M.2 M key card enables high-performance yet power-efficient AI inference for edge devices and edge servers.

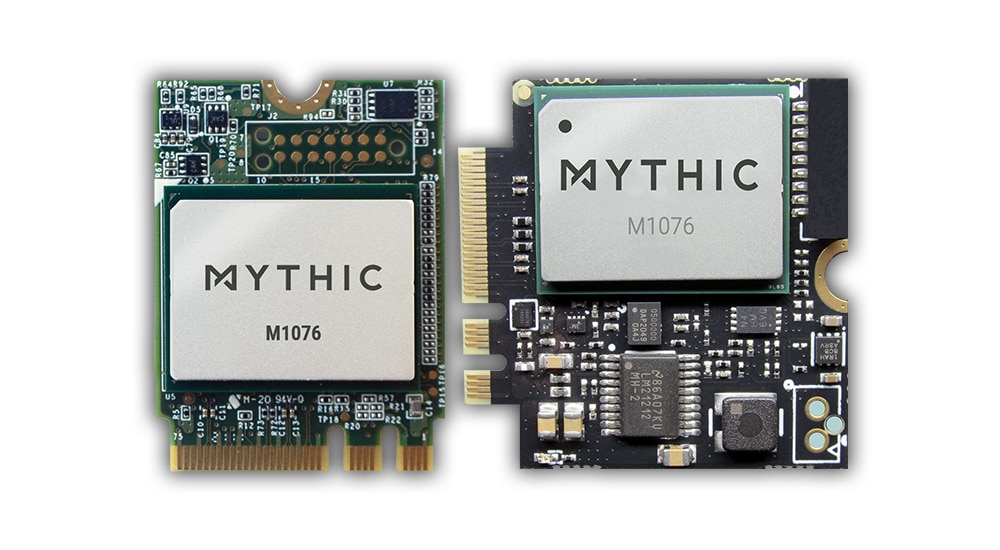

ME1076 M.2 A+E Key Card

The ME1076 M.2 A+E key card enables high-performance yet power-efficient AI inference for edge devices and edge servers.
